- SCREAMING FROG SEO SPIDER HOW MANY COMPUTERS SOFTWARE

- SCREAMING FROG SEO SPIDER HOW MANY COMPUTERS CODE

- SCREAMING FROG SEO SPIDER HOW MANY COMPUTERS TV

What are custom extractions? Custom extractions are a set of functions in the Screaming Frog SEO Spider that extract specific information from web pages.
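As a rough illustration of what an extraction does conceptually, the sketch below pulls a few fields out of one page using only Python's standard library. It is not how Screaming Frog is implemented; the `FieldExtractor` class, the `extract_fields` helper, and the sample page are all hypothetical names and data made up for this example.

```python
from html.parser import HTMLParser


class FieldExtractor(HTMLParser):
    """Collects the <title>, the meta description, and the first <h1> of a page."""

    def __init__(self):
        super().__init__()
        self.fields = {"title": "", "description": "", "h1": ""}
        self._current = None  # tag whose text is currently being captured

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.fields["description"] = attrs.get("content") or ""
        elif tag in ("title", "h1") and not self.fields[tag]:
            # Only start capturing if this field has not been filled yet.
            self._current = tag

    def handle_data(self, data):
        if self._current:
            self.fields[self._current] += data

    def handle_endtag(self, tag):
        if tag == self._current:
            self.fields[tag] = self.fields[tag].strip()
            self._current = None


def extract_fields(html):
    """Return a dict with the extracted fields for one HTML document."""
    parser = FieldExtractor()
    parser.feed(html)
    parser.close()
    return parser.fields


# Hypothetical sample page, standing in for a crawled URL.
SAMPLE_PAGE = """<html><head><title> Blue Widget | Example Shop </title>
<meta name="description" content="A sturdy blue widget."></head>
<body><h1>Blue Widget</h1><p>In stock.</p></body></html>"""

if __name__ == "__main__":
    print(extract_fields(SAMPLE_PAGE))
```

Running the same function over every crawled page and writing the resulting dicts to a CSV file would give the kind of spreadsheet that is otherwise assembled by hand.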


The Screaming Frog SEO Spider software is a website crawler that improves onsite SEO by extracting and analyzing your website’s data using a graphical user interface (GUI).

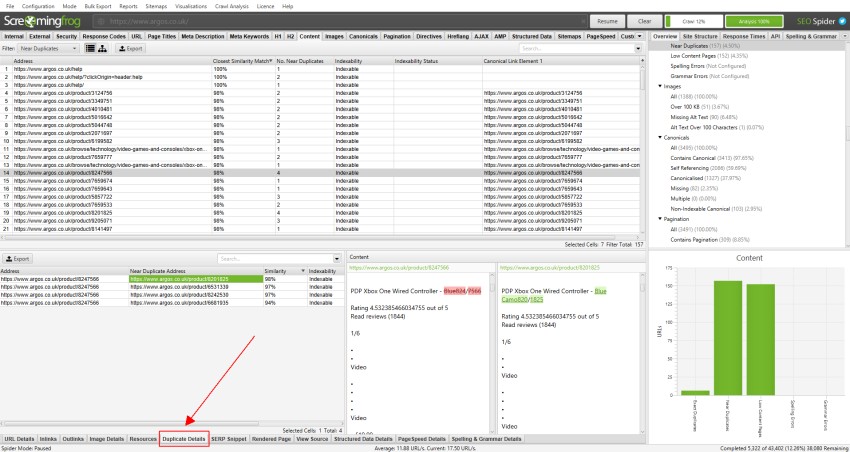

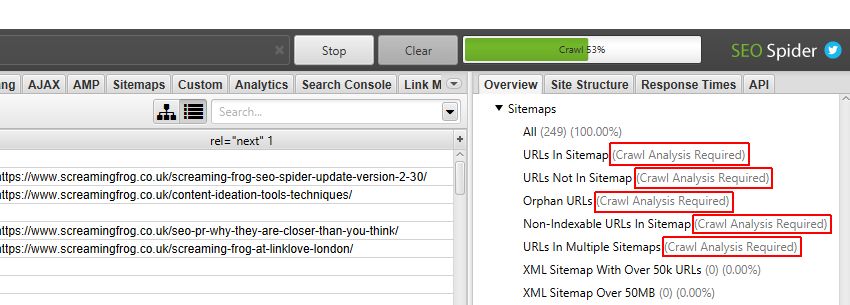

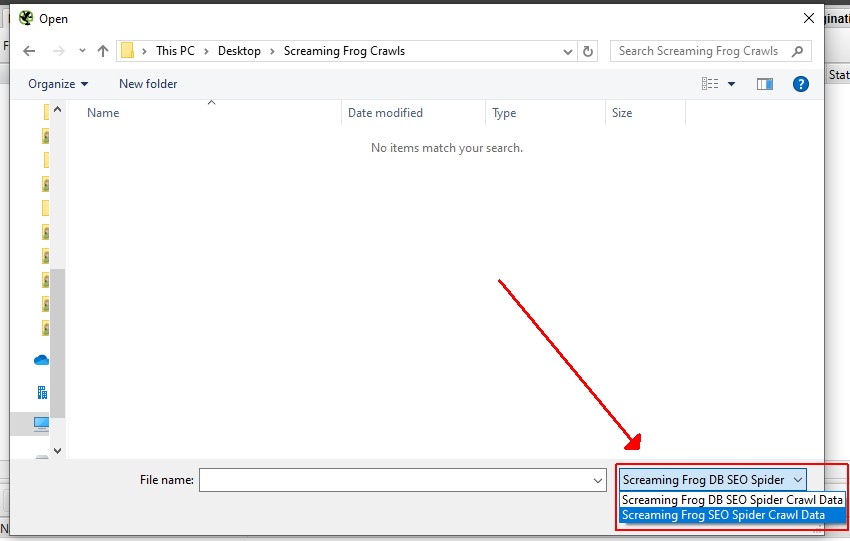

Screaming Frog is a powerful SEO tool with many features for search engine optimization. One of its lesser-known features, Custom Extraction, allows you to easily extract data from your crawls. This blog post discusses how Screaming Frog Custom Extraction works and why it can help improve your SEO efforts.

Websites hold a ton of helpful information, but most of the time it is too laborious or complicated to visit every page on a website and copy product data, metadata, title tags, and anchor text into a spreadsheet. This is where Screaming Frog comes to the rescue, with custom data extractions that automate the process. Custom extractions are a form of web scraping (also called web harvesting or web data extraction): they scrape and extract data from websites and let you store it locally on your computer.

For beginners, some questions you might have: What is the Screaming Frog SEO Spider?

The simplest possible way to start with JavaScript crawling is by using the Screaming Frog SEO Spider. Few people know that, since version 6.0, Screaming Frog supports rendered crawling. If you already have Screaming Frog installed on your computer, all you have to do is go to Configuration → Spider → Rendering, select JavaScript, and enable “Rendered Page Screen Shots.”

After setting this up, we can start crawling data and see each page rendered. That’s it: we are now successfully crawling JavaScript with Screaming Frog.

Please keep in mind that the data you get from Screaming Frog is basically what correctly rendered JavaScript should look like. However, Google doesn’t crawl JavaScript in the same way. This is why so many JS websites are investing in prerendering services.

Let me show you an example. As you saw above, Screaming Frog properly crawled and rendered this URL. However, this URL isn’t properly indexed by Google.

And if you don’t believe that the screenshot above proves that Google isn’t always crawling JavaScript properly, let me show you one more example.


If you now use the right tools, e.g., the “Inspect” feature in Google Chrome, you won’t see how the code really appears. Instead, what you’ll see is DOM-processed and JavaScript-rendered code. Basically, what you see above is code “processed” by the browser.

To see what the source code looked like before rendering, you need to use the “View Page Source” option. After doing so, you can quickly notice that all the content you saw on the page isn’t actually present within the code.

This is why crawling JavaScript websites without processing the DOM, loading dynamic content, and rendering JavaScript is pointless. As you can see above, with JavaScript rendering disabled, crawlers can’t process a website’s code or content, and, therefore, the crawled data is useless.
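The gap between the raw source and the rendered DOM can be illustrated with a small Python sketch. Both HTML snippets are hypothetical and written by hand: `RAW_SOURCE` stands in for what “View Page Source” would show, and `RENDERED_DOM` stands in for what a browser would produce after running the script. The code does not execute any JavaScript; it only shows what a crawler that reads markup without rendering would find in each case.

```python
from html.parser import HTMLParser

# Tags whose text is not part of the visible page body.
SKIPPED_TAGS = ("script", "style", "title")


class VisibleText(HTMLParser):
    """Collects text nodes, ignoring script, style, and title content."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIPPED_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and not self._skip_depth:
            self.parts.append(text)


def visible_text(html):
    """Return the body text a non-rendering crawler could read from the markup."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(parser.parts)


# Hypothetical raw source: an empty container that a script fills in later.
RAW_SOURCE = """<html><head><title>Show</title></head>
<body><div id="app"></div>
<script>document.getElementById("app").innerHTML = "<h1>Season 1</h1>";</script>
</body></html>"""

# Hypothetical rendered DOM: the same page after the script has run.
RENDERED_DOM = """<html><head><title>Show</title></head>
<body><div id="app"><h1>Season 1</h1></div></body></html>"""

if __name__ == "__main__":
    print("raw source:  ", repr(visible_text(RAW_SOURCE)))
    print("rendered DOM:", repr(visible_text(RENDERED_DOM)))
```

The raw source yields no body text at all, while the rendered version yields the heading, which is the difference between crawling with rendering disabled and enabled.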


SEO for JavaScript websites is considered one of the most complicated fields of technical SEO. Fortunately, there are more and more data, case studies, and tools that make this a little bit easier, even for technical SEO rookies.

The answer to this question is somewhat complex and could just as well be a separate article. However, to simplify this topic, let’s just say that it is all about computing power.

With HTML-based websites (PHP, CSS, etc.), crawlers can “see” the website’s content by analyzing the code. With websites based on JavaScript and dynamic content, a crawler must read and analyze the Document Object Model (DOM). Such a website also has to be fully rendered, after all the code has been loaded and processed. The most straightforward tool we can use to see the rendered website is a browser. This is why crawling JavaScript is often referred to as crawling using “headless browsers.”

Crawling JavaScript websites without rendering or reading DOM

Before moving forward, let me show you an example of a JavaScript website that you all know. To make it even more specific, let’s have a look at the “Casual” TV show landing page –.